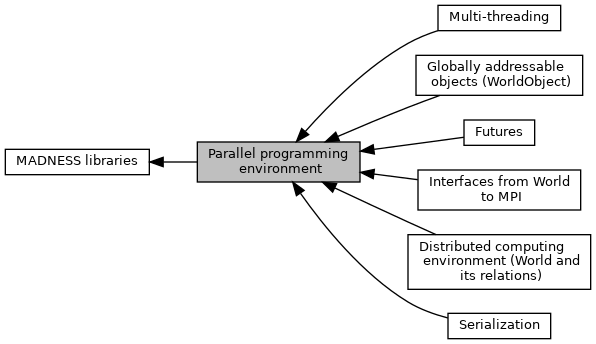

Modules | |

| Distributed computing environment (World and its relations) | |

| Interfaces from World to MPI | |

| Globally addressable objects (WorldObject) | |

| Futures | |

| Serialization | |

| Multi-threading | |

Files | |

| file | distributed_id.h |

| file | MADworld.h |

| This header should include pretty much everything needed for the parallel runtime. | |

| file | range.h |

Implement the Range class for parallel iteration. | |

| file | timers.cc |

| Implementation of functions for timers, etc. | |

| file | timers.h |

| Wrappers around platform dependent timers and performance info. | |

| file | worldtypes.h |

| Defines types used by the parallel runtime. | |

Classes | |

| class | madness::Range< iteratorT > |

| Range, vaguely a la Intel TBB, to encapsulate a random-access, STL-like start and end iterator with chunksize. More... | |

Typedefs | |

| typedef tbb::split | madness::Split |

| Dummy class, a la Intel TBB, used to distinguish splitting constructor. | |

Detailed Description

The MADNESS parallel programming environment combines several successful elements from other models and aims to provide a rich and scalable framework for massively parallel computing while seamlessly integrating with legacy applications and libraries. It includes

- Distributed sparse containers with one-sided access to items, transparent remote method invocation, an owner-computes task model, and optional user control over placement/distribution.

- Distributed objects that can be globally addressed.

- Futures (results of unevaluated expressions) for composition of latency tolerant algorithms and expression of dependencies between tasks.

- Globally accessible task queues in each process which can be used individually or collectively to provide a single global task queue.

- Facile management of computations on processor sub-groups.

- Integration with MPI.

- Active messages to items in a container, distributed objects, and processes.

- Efficient use of multicore processors using pthreads.

The two main early influences for this work were Cilk (Kuszmaul, http://supertech.csail.mit.edu/cilk) and Charm++ (Kale, http://charm.cs.uiuc.edu). Subsequently, ACE (Schmidt, http://www.cs.wustl.edu/~schmidt/ACE.html), STAPL (Rauchwerger and Amato, http://parasol.tamu.edu/groups/rwergergroup/research/stapl), and the HPCS language projects (X10, http://domino.research.ibm.com/comm/research_projects.nsf/pages/x10.index.html ; Chapel, http://chapel.cs.washington.edu ; Fortress, http://fortress.sunsource.net ) and the amazingly talented teams and individuals developing these.

- Introduction to the parallel runtime

The entire parallel environment is encapsulated in an instance of the class madness::World which is instantiated by wrapping an MPI communicator. Multiple worlds may exist, overlap, or be dynamically created and destroyed. Distributed containers (currently associative arrays or hash tables) and distributed objects may be constructed from a world instance.

The recommended approaches to develop scalable and latency tolerant parallel algorithms are either object- or task-centric decompositions rather than the process-centric approach usually forced upon MPI applications. The object-centric approach uses distributed containers (or distributed objects) to store application data. Computation is expressed by sending tasks or messages to objects, using the task queue to automatically manage dependencies expressed via futures. Placement of data and scheduling/placement of computation can be delgated to the container and task queue, unless there are specific performance concerns in which case the application can have full knowledge and control of these.

Items in a container may be accessed largely as if in a standard STL container, but instead of returning an iterator, accessors instead return a madness::Future iterator. A future is a container for the result of a possibly unevaluated expression. In the case of an accessor, if the requested item is local then the result is immediately available. However, if the item is remote, it may take some time before the data is made available locally. You could immediately try to use the future, which would work but with the downside of internally waiting for all of the communication to occur. Much better is to keep on working and only use the future when it is ready.

By far the best way to compute with futures is to pass them as arguments to a new task. Once the futures are ready, the task will be automatically scheduled for execution. A task that produces a result also returns it as a future, so this same mechanism may be used to express dependencies between tasks.

Thus, a very natural expression of a parallel algorithm is as a sequence of dependent tasks. For example, in MADNESS many of the algorithms working on distributed, multidimensional trees start with just a single task working on the root of the tree, with all other processes waiting for something to do. That one task starts recursively (depth or breadth first) traversing the tree and generating new tasks for each node. These in turn generate more tasks on their sub-trees.

The World.am member provides inter-process active message functionality, which is the foundation on which everything else is built. We do not recommend that applications make routine or direct use of inter-process active messages. Instead, try to compose applications using messaging to/between items in distributed containers and the local task queue(s).

The World.mpi member is the preferred way to use MPI since it has a growing amount of instrumentation and debugging capability, though MPI routines may be called directly if necessary. However, MPI is again a low-level model and we do not encourage its direct use. It is there since it is the portable standard for communication and to facilitate integration with legacy applications.

The World.gop member provides global operations that are internally non-blocking, enabling the invoking thread to continue working.

The execution model is sequentially consistent. That is, from the perspective of a single thread of execution, operations on the same local/remote object behave as if executed sequentially in the same order as programmed. This means that performing a read after a write/modify returns the modified value, as expected. Such behavior applies only to the view of a single thread — the execution of multiple threads and active messages from different threads may be interleaved arbitrarily.

- Note

- More information on madness::World can be found in the Distributed computing environment (World and its relations) module.

- Distributed Containers (WorldContainer)

The only currently provided containers are associative arrays or maps that are almost directly equivalent to the STL map or the GNU hash_map. Indeed, the implementation can use either of these for the local storage, though the GNU hash_map is to be preferred for performance reasons and is the only one discussed here.

A map generalizes the concept of an array (which maps an integer index in a dense range to a value) by mapping an arbitrary key to a value. This is a very natural, general and efficient mechanism for storing sparse data structures. The distribution of items in the container between processes is based upon a function which maps the key to a process. There is a default mapping which is essentially a pseudo-random uniform mapping, but the user can provide their own (possibly data-dependent) operator to control the distribution.

The keys and values associated with containers must be serializble by the MADNESS archive mechanism. Please refer to archive.h and documentation therein for more information. In addition, the keys must support both

- testing for equality, either by overloading

==or by specializingstd::equal_to<key_type>, - computing a hash value. See worldhash.h for details.

Here is an example of a key that might be used in an octtree.

- Distributed Objects

Distributed objects (madness::WorldObject) provide all of the communication and other resources necessary to build new distributed capabilities. The distributed container class (madness::WorldContainer) actually inherits most of its functionality from madness::WorldObject.

Typedef Documentation

◆ Split

| typedef tbb::split madness::Split |

Dummy class, a la Intel TBB, used to distinguish splitting constructor.